Lies, Damned Lies, Statistics and Benchmarks

Another nice batch of instructions was implemented in the recent update. This is an important milestone: most of the instructions used in the Mandelbrot test are now JIT compiled. Why is it important?

Because finally we can do some benchmarking!

Source: http://wumocomicstrip.com/2012/04/16

The update

So, the changes in this update are:

- Implementation of

ADD.x #imm,Dy,

ADD.x regy,Dz,

ADDA.x regy,Az,

ADDI.x #immq,Dy,

AND.x #imm,Dy,

ANDI.x #immq,Dy,

ASL.x #imm,Dy,

ASR.x #imm,Dy,

BTST.L #imm,Dx,

CMP.x #imm,Dy/Ay,

CMPA.W #imm,Ay,

CMPI.x #immq,Dy,

EOR.x #imm,Dy,

EORI.x #immq,Dy,

LSR.x #imm,Dy,

MULS.W Dx,Dy,

MULU.W Dx,Dy,

ORI.x #immq,Dy,

ROL.x #imm,Dy,

SUB.x regy,Dz,

TST.x reg instructions.

- Fixed OR.x reg,reg flag dependency.

- Recycling of mapped temporary registers is implemented.

- Added the possibility of inverting C flag after extracting.

- CMP.L #imm,Ax and CMP.W #imm,Ax were removed from the table; there are no such instructions.

Phew, that was a nice long list.
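The post does not say why the C flag sometimes has to be inverted after extraction. One likely reason, stated here as an assumption, is that the two processors disagree on what the carry means after a subtraction: on the 68k the C flag signals a borrow, while the PowerPC carrying subtract computes a + ~b + 1 and reports the carry out of that addition. The sketch below models both conventions in plain C; the function names are hypothetical and are not taken from the E-UAE sources.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* 68k convention: C is set when a - b needs a borrow. */
bool m68k_carry_after_sub(uint32_t a, uint32_t b)
{
    return a < b;
}

/* PowerPC convention for a carrying subtract: a - b is computed as
 * a + ~b + 1, and the carry bit is the carry out of that 32-bit sum. */
bool ppc_carry_after_sub(uint32_t a, uint32_t b)
{
    uint64_t wide = (uint64_t)a + (uint64_t)(uint32_t)~b + 1u;
    return (wide >> 32) != 0;
}

/* Hypothetical helper: take the host carry and optionally invert it
 * so that it matches what the emulated 68k program expects. */
bool extract_carry(bool host_carry, bool invert)
{
    assert(host_carry == 0 || host_carry == 1);
    return invert ? !host_carry : host_carry;
}
```

With these definitions, extract_carry(ppc_carry_after_sub(a, b), true) gives the same answer as m68k_carry_after_sub(a, b) for any pair of operands, which is the kind of fix-up an optional inversion step would provide for subtract and compare instructions.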

Recycling of the temporary registers

While I was running amok implementing the selected instructions for the Mandelbrot test, I had to face the inevitable: a shortage of temporary registers. When I implemented the register allocation subsystem I tried to postpone the fix for this problem, but now that a lot more instructions are implemented, the contiguous translated chunks are getting longer. Suddenly, I got the error message that I wrote a couple of months ago:

“Error: JIT compiler ran out of free temporary registers”

Probably this error needs some explanation. Let’s start with how the registers are handled in the JIT engine:

In Petunia the emulated 68k registers are statically mapped to PowerPC registers. This approach has its benefits: constructing the code that handles the emulated registers is much easier. But it also has some major issues, mainly because all registers must be up to date at any time. Whenever the execution leaves the compiled code, all registers must be saved and restored to the previous state. Needless to say, that takes a long time: more than 20 registers must be saved.

In E-UAE this would be a no-go. The compiled code consists of smaller contiguous chunks, and the support functions from the environment must be called quite often. The rest of the emulator is written in C and expects a certain state of the registers at any given time (which is described in detail in the PowerPC SysV ABI document).

How to overcome these issues? First, I realized that there is no point in keeping the contents of all the emulated registers in real registers all the time. The usage of a given emulated register is mostly localized to a certain range of the code that consists of closely tied instructions.

Then I figured out from the SysV ABI that I can safely use a range of registers that need no saving/restoring, and that I can also make use of the non-volatile registers that must be restored, because any called function must restore those too.

I ended up implementing a dynamically allocated register serving system that can handle the needs of the compiling.

There are two types of registers: the temporary registers that are used in one instruction only for a specific role and the mapped 68k registers that are loaded once and kept until the registers must be released because the execution leaves the compiled code.

Back to the error message: eventually the free registers run out because the compiler keeps the mapped 68k registers between instructions. I had to figure out a way to force the release of a previously mapped register when there are no more free registers left. Also, I had to make sure that I wouldn’t release a register that was allocated just recently and is still needed for compiling the current instruction (or for the following instructions that might depend on the same 68k register).

This issue was resolved by a simple locking mechanism for the mapped registers: when a register is mapped, it is locked at the same time for the current instruction. At the end of an instruction all mapped 68k registers are unlocked but not released.

When a release has to be forced, I look for an unlocked mapped register and release it. The search for the next unlocked register proceeds in a round-robin manner, to make sure I won’t release-allocate-release the same register all the time.

This system looks robust enough to handle the needs of the compiling.
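The locking and round-robin recycling described above can be sketched roughly as follows. This is a minimal illustration and not the actual E-UAE code: the pool size, the type names and the function names (reg_pool_t, pool_map, pool_end_instruction) are all hypothetical, and the step where a real JIT would emit code to write the evicted value back to memory is reduced to a comment.

```c
#include <assert.h>
#include <stdbool.h>

#define NUM_HOST_REGS 4     /* hypothetical: tiny pool so recycling kicks in early */
#define NO_M68K_REG   (-1)

typedef struct {
    int  m68k_reg;  /* emulated register held here, or NO_M68K_REG if free */
    bool locked;    /* true while the current instruction still needs it */
} host_reg_t;

typedef struct {
    host_reg_t regs[NUM_HOST_REGS];
    int        next_victim;  /* round-robin cursor for forced releases */
} reg_pool_t;

void pool_init(reg_pool_t *p)
{
    for (int i = 0; i < NUM_HOST_REGS; i++) {
        p->regs[i].m68k_reg = NO_M68K_REG;
        p->regs[i].locked = false;
    }
    p->next_victim = 0;
}

/* Returns the host register index that holds m68k_reg, mapping it first
 * if needed. Returns -1 when every host register is locked. */
int pool_map(reg_pool_t *p, int m68k_reg)
{
    assert(m68k_reg >= 0);

    /* Already mapped: just lock it for the current instruction. */
    for (int i = 0; i < NUM_HOST_REGS; i++) {
        if (p->regs[i].m68k_reg == m68k_reg) {
            p->regs[i].locked = true;
            return i;
        }
    }
    /* Take a free host register if one is left. */
    for (int i = 0; i < NUM_HOST_REGS; i++) {
        if (p->regs[i].m68k_reg == NO_M68K_REG) {
            p->regs[i].m68k_reg = m68k_reg;
            p->regs[i].locked = true;
            return i;
        }
    }
    /* Forced release: scan from the cursor for an unlocked mapping. */
    for (int i = 0; i < NUM_HOST_REGS; i++) {
        int victim = (p->next_victim + i) % NUM_HOST_REGS;
        if (!p->regs[victim].locked) {
            /* A real JIT would emit a store of the old value here. */
            p->regs[victim].m68k_reg = m68k_reg;
            p->regs[victim].locked = true;
            p->next_victim = (victim + 1) % NUM_HOST_REGS;
            return victim;
        }
    }
    return -1;  /* the "ran out of free temporary registers" case */
}

/* At the end of an instruction: unlock everything, release nothing. */
void pool_end_instruction(reg_pool_t *p)
{
    for (int i = 0; i < NUM_HOST_REGS; i++)
        p->regs[i].locked = false;
}
```

Advancing the cursor past the evicted slot is what keeps two consecutive forced releases from hitting the same host register. Temporary registers, which live for one instruction only, are left out of this sketch.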

Back to the lies… errr… benchmarks

As I already mentioned, the implementation has reached the point where almost all instructions needed by the Mandelbrot test are implemented. So let’s see how the compiled code performs.

The test system consists of a Micro AmigaOne (G3/800 MHz), a slightly outdated AmigaOS4 version and my beloved Galaxy S2, which acts as a stopwatch. (Yes, I measure the time with a stopwatch. I didn’t want to spend too much effort on figuring out how to measure the time more precisely.)

Running the Mandelbrot test, I got the following results:

Interpretive: ~6 seconds,

JIT compiled: ~3 seconds.

Not too precise, but it seems the JIT compiler is doing nicely: the compiled code finished twice as fast as the interpretive one.

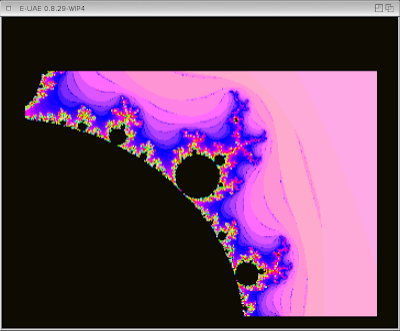

To confirm the results I created a new version of the Mandelbrot test that needs a lot more processor power to complete. You can find it among the test kickstarts; it is called mandel_though_hw.kick. The code is essentially the same as the previous Mandelbrot test, but I tweaked the parameters a bit: I zoomed in and adjusted the drop-out threshold, so the picture is more detailed.

Honey! The Mandelbrot is almost done in the oven!

The results are:

Interpretive: 108 seconds,

JIT compiled: 52 seconds.

Yay! It is indeed twice as fast! Rejoice!
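To see why the longer run is the more convincing one, it helps to put rough error bounds on the speedup. The sketch below assumes each stopwatch reading may be off by up to one second; that error figure is an illustrative assumption, not something measured here, and the function names are hypothetical.

```c
#include <assert.h>

/* Lowest speedup consistent with both readings being off by up to
 * err_s seconds: interpreter time overestimated, JIT time underestimated. */
double speedup_low(double interp_s, double jit_s, double err_s)
{
    assert(interp_s > err_s);
    return (interp_s - err_s) / (jit_s + err_s);
}

/* Highest speedup consistent with the same error bound. */
double speedup_high(double interp_s, double jit_s, double err_s)
{
    assert(jit_s > err_s);
    return (interp_s + err_s) / (jit_s - err_s);
}
```

Under that assumption the short test (6 s versus 3 s) only pins the speedup down to somewhere between 1.25x and 3.5x, while the long test (108 s versus 52 s) narrows it to roughly 2.02x to 2.14x.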

Disclaimer

Why we must not jump to any conclusions just yet regarding the speed...

First of all, not all instructions are implemented yet. Implementing them most likely means a further speed increase, because the JIT-compiled instructions are always faster than, or at least as fast as, the interpretive implementation. When the emulation hits an unimplemented instruction, it has to call the interpretive implementation, which essentially means storing all the emulated registers in memory and restarting the register mapping.
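The cost of that fallback can be pictured with a small model. This is only a sketch under stated assumptions: the structure, the slot count and the function names are hypothetical, and plain C variables stand in for the PowerPC registers and for the store instructions a real JIT would emit.

```c
#include <assert.h>
#include <stdint.h>

#define M68K_REG_COUNT 16   /* D0-D7 and A0-A7 */
#define HOST_SLOTS     4    /* hypothetical number of mapped host registers */
#define UNMAPPED       (-1)

typedef struct {
    uint32_t m68k_regs[M68K_REG_COUNT]; /* register file in memory, as the interpreter sees it */
    uint32_t host_value[HOST_SLOTS];    /* stand-in for values living in host registers */
    int      host_owner[HOST_SLOTS];    /* 68k register held by each slot, or UNMAPPED */
} jit_state_t;

void jit_state_init(jit_state_t *s)
{
    for (int i = 0; i < M68K_REG_COUNT; i++)
        s->m68k_regs[i] = 0;
    for (int i = 0; i < HOST_SLOTS; i++) {
        s->host_value[i] = 0;
        s->host_owner[i] = UNMAPPED;
    }
}

/* Before handing an instruction to the interpreter: write every mapped
 * value back to memory and forget the mapping. Returns the number of
 * stores, which is the extra work the fallback costs. */
int flush_for_interpreter(jit_state_t *s)
{
    int stores = 0;
    for (int i = 0; i < HOST_SLOTS; i++) {
        int owner = s->host_owner[i];
        if (owner != UNMAPPED) {
            assert(owner >= 0 && owner < M68K_REG_COUNT);
            s->m68k_regs[owner] = s->host_value[i];
            s->host_owner[i] = UNMAPPED;
            stores++;
        }
    }
    return stores;
}
```

After the flush every slot is unmapped, so the compiled code that follows the interpreted instruction has to load its registers again. Each unimplemented instruction in a hot loop therefore pays for the stores and for the reloads.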

Also, the register flow optimization is not implemented yet. That will certainly be a big boost to the speed, because it will eliminate lots of instructions from the compiled code.

On the other hand, this test relies mostly on rather simple, processing-heavy instructions. When the test code does a lot more memory access, the difference between the interpretive and the JIT-compiled code will almost certainly be much smaller.

Anyway, I hope I have assured all of you that we are heading in the right direction.

Full speed ahead! (Don’t mind the mines.)